在 TensorFlow.org 上查看 在 TensorFlow.org 上查看 |

在 Google Colab 中运行 在 Google Colab 中运行 |

在 Github 上查看源代码 在 Github 上查看源代码 |

下载笔记本 下载笔记本 |

此笔记本演示如何在使用结构时间序列 (STS) 模型进行拟合与预测时,使用 TFP 近似推断工具合并(非高斯)观测模型。在本例中,我们将使用泊松观测模型来处理离散计数数据。

import time

import matplotlib.pyplot as plt

import numpy as np

import tensorflow.compat.v2 as tf

import tensorflow_probability as tfp

from tensorflow_probability import bijectors as tfb

from tensorflow_probability import distributions as tfd

tf.enable_v2_behavior()

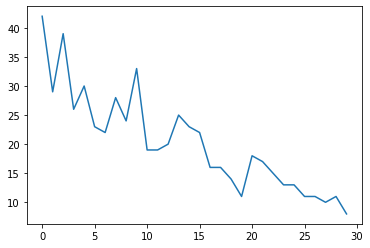

合成数据

首先,我们将生成一些合成计数数据:

num_timesteps = 30

observed_counts = np.round(3 + np.random.lognormal(np.log(np.linspace(

num_timesteps, 5, num=num_timesteps)), 0.20, size=num_timesteps))

observed_counts = observed_counts.astype(np.float32)

plt.plot(observed_counts)

[<matplotlib.lines.Line2D at 0x7f940ae958d0>]

模型

我们将指定一个具有随机游动线性趋势的简单模型:

def build_model(approximate_unconstrained_rates):

trend = tfp.sts.LocalLinearTrend(

observed_time_series=approximate_unconstrained_rates)

return tfp.sts.Sum([trend],

observed_time_series=approximate_unconstrained_rates)

该模型将不在观测的时间序列上进行运算,而是在决定观测值的泊松率参数序列上进行运算。

由于泊松率必须为正,因此我们使用双射器将实值 STS 模型转换为正值分布。Softplus 转换 \(y = \log(1 + \exp(x))\) 是很自然的选择,因为它对于正值几乎是线性的,但也可以使用其他选择,例如 Exp(将正态随机游动转换为对数正态随机游动)。

positive_bijector = tfb.Softplus() # Or tfb.Exp()

# Approximate the unconstrained Poisson rate just to set heuristic priors.

# We could avoid this by passing explicit priors on all model params.

approximate_unconstrained_rates = positive_bijector.inverse(

tf.convert_to_tensor(observed_counts) + 0.01)

sts_model = build_model(approximate_unconstrained_rates)

为了对非高斯观测模型使用近似推断,我们将 STS 模型编码为 TFP JointDistribution。该联合分布中的随机变量是 STS 模型的参数、隐泊松率的时间序列,以及观测的计数。

def sts_with_poisson_likelihood_model():

# Encode the parameters of the STS model as random variables.

param_vals = []

for param in sts_model.parameters:

param_val = yield param.prior

param_vals.append(param_val)

# Use the STS model to encode the log- (or inverse-softplus)

# rate of a Poisson.

unconstrained_rate = yield sts_model.make_state_space_model(

num_timesteps, param_vals)

rate = positive_bijector.forward(unconstrained_rate[..., 0])

observed_counts = yield tfd.Poisson(rate, name='observed_counts')

model = tfd.JointDistributionCoroutineAutoBatched(sts_with_poisson_likelihood_model)

推断准备

我们希望在给定观测计数的情况下,推断出模型中未观测的量。首先,我们根据观测的计数来确定联合对数密度。

pinned_model = model.experimental_pin(observed_counts=observed_counts)

我们还需要一个约束双射函数来确保推断遵守对 STS 模型参数的约束(例如,比例必须为正)。

constraining_bijector = pinned_model.experimental_default_event_space_bijector()

使用 HMC 进行推断

我们将使用 HMC(具体为 NUTS)从模型参数和隐泊松率的联合后验中进行采样。

这将比使用 HMC 拟合标准 STS 模型要慢得多,因为除了模型的(相对较少的)参数外,我们还必须推断泊松率的整个序列。因此,我们将运行相对较少的步骤;对于推断质量至关重要的应用,增加这些值或运行多个链可能会很有意义。

Sampler configuration

# Allow external control of sampling to reduce test runtimes.

num_results = 500 # @param { isTemplate: true}

num_results = int(num_results)

num_burnin_steps = 100 # @param { isTemplate: true}

num_burnin_steps = int(num_burnin_steps)

首先,我们指定一个采样器,然后使用 sample_chain 运行该采样内核来生成样本。

sampler = tfp.mcmc.TransformedTransitionKernel(

tfp.mcmc.NoUTurnSampler(

target_log_prob_fn=pinned_model.unnormalized_log_prob,

step_size=0.1),

bijector=constraining_bijector)

adaptive_sampler = tfp.mcmc.DualAveragingStepSizeAdaptation(

inner_kernel=sampler,

num_adaptation_steps=int(0.8 * num_burnin_steps),

target_accept_prob=0.75)

initial_state = constraining_bijector.forward(

type(pinned_model.event_shape)(

*(tf.random.normal(part_shape)

for part_shape in constraining_bijector.inverse_event_shape(

pinned_model.event_shape))))

# Speed up sampling by tracing with `tf.function`.

@tf.function(autograph=False, jit_compile=True)

def do_sampling():

return tfp.mcmc.sample_chain(

kernel=adaptive_sampler,

current_state=initial_state,

num_results=num_results,

num_burnin_steps=num_burnin_steps,

trace_fn=None)

t0 = time.time()

samples = do_sampling()

t1 = time.time()

print("Inference ran in {:.2f}s.".format(t1-t0))

Inference ran in 24.83s.

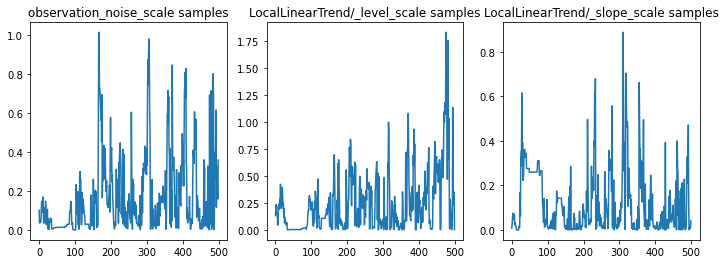

我们可以通过检查参数轨迹对推断进行健全性检查。在本例中,它们看起来已经探索了对数据的多种解释,这很好,但是更多的样本将有助于判断链的混合程度。

f = plt.figure(figsize=(12, 4))

for i, param in enumerate(sts_model.parameters):

ax = f.add_subplot(1, len(sts_model.parameters), i + 1)

ax.plot(samples[i])

ax.set_title("{} samples".format(param.name))

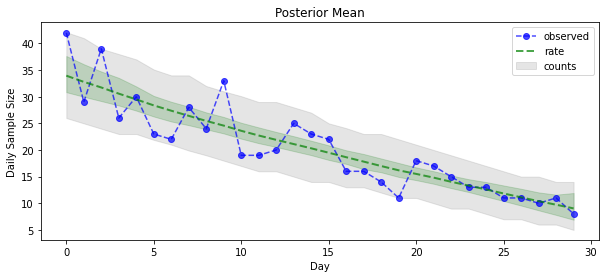

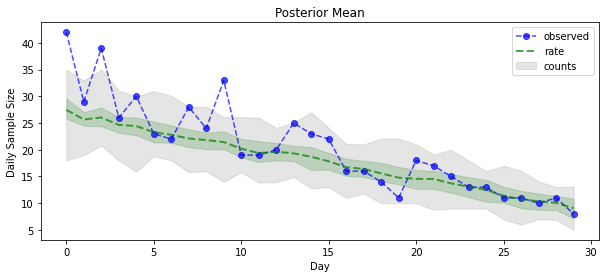

现在是获得回报的时候了:我们来看一下泊松率的后验!我们还将绘制观测计数的 80% 预测区间,并且可以检查此区间是否看起来包含了我们实际观测计数的约 80%。

param_samples = samples[:-1]

unconstrained_rate_samples = samples[-1][..., 0]

rate_samples = positive_bijector.forward(unconstrained_rate_samples)

plt.figure(figsize=(10, 4))

mean_lower, mean_upper = np.percentile(rate_samples, [10, 90], axis=0)

pred_lower, pred_upper = np.percentile(np.random.poisson(rate_samples),

[10, 90], axis=0)

_ = plt.plot(observed_counts, color="blue", ls='--', marker='o', label='observed', alpha=0.7)

_ = plt.plot(np.mean(rate_samples, axis=0), label='rate', color="green", ls='dashed', lw=2, alpha=0.7)

_ = plt.fill_between(np.arange(0, 30), mean_lower, mean_upper, color='green', alpha=0.2)

_ = plt.fill_between(np.arange(0, 30), pred_lower, pred_upper, color='grey', label='counts', alpha=0.2)

plt.xlabel("Day")

plt.ylabel("Daily Sample Size")

plt.title("Posterior Mean")

plt.legend()

<matplotlib.legend.Legend at 0x7f93ffd35550>

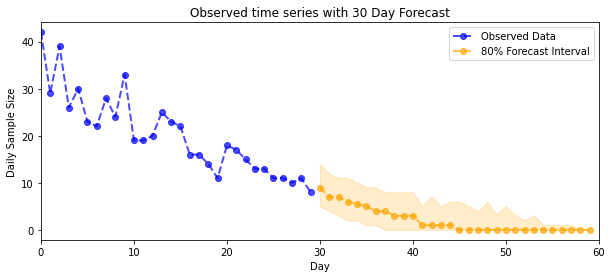

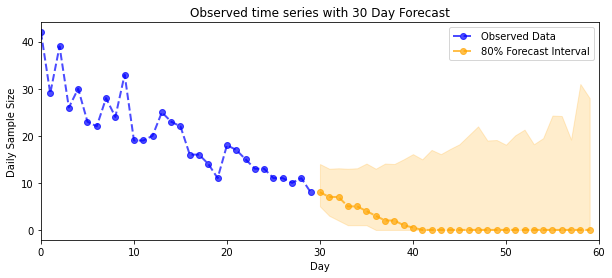

预测

为了预测观测的计数,我们将使用标准 STS 工具在隐泊松率上构建预测分布(同样,在无约束空间中,因为 STS 设计用于对实值数据进行建模),然后通过泊松观测模型来传递采样的预测:

def sample_forecasted_counts(sts_model, posterior_latent_rates,

posterior_params, num_steps_forecast,

num_sampled_forecasts):

# Forecast the future latent unconstrained rates, given the inferred latent

# unconstrained rates and parameters.

unconstrained_rates_forecast_dist = tfp.sts.forecast(sts_model,

observed_time_series=unconstrained_rate_samples,

parameter_samples=posterior_params,

num_steps_forecast=num_steps_forecast)

# Transform the forecast to positive-valued Poisson rates.

rates_forecast_dist = tfd.TransformedDistribution(

unconstrained_rates_forecast_dist,

positive_bijector)

# Sample from the forecast model following the chain rule:

# P(counts) = P(counts | latent_rates)P(latent_rates)

sampled_latent_rates = rates_forecast_dist.sample(num_sampled_forecasts)

sampled_forecast_counts = tfd.Poisson(rate=sampled_latent_rates).sample()

return sampled_forecast_counts, sampled_latent_rates

forecast_samples, rate_samples = sample_forecasted_counts(

sts_model,

posterior_latent_rates=unconstrained_rate_samples,

posterior_params=param_samples,

# Days to forecast:

num_steps_forecast=30,

num_sampled_forecasts=100)

forecast_samples = np.squeeze(forecast_samples)

def plot_forecast_helper(data, forecast_samples, CI=90):

"""Plot the observed time series alongside the forecast."""

plt.figure(figsize=(10, 4))

forecast_median = np.median(forecast_samples, axis=0)

num_steps = len(data)

num_steps_forecast = forecast_median.shape[-1]

plt.plot(np.arange(num_steps), data, lw=2, color='blue', linestyle='--', marker='o',

label='Observed Data', alpha=0.7)

forecast_steps = np.arange(num_steps, num_steps+num_steps_forecast)

CI_interval = [(100 - CI)/2, 100 - (100 - CI)/2]

lower, upper = np.percentile(forecast_samples, CI_interval, axis=0)

plt.plot(forecast_steps, forecast_median, lw=2, ls='--', marker='o', color='orange',

label=str(CI) + '% Forecast Interval', alpha=0.7)

plt.fill_between(forecast_steps,

lower,

upper, color='orange', alpha=0.2)

plt.xlim([0, num_steps+num_steps_forecast])

ymin, ymax = min(np.min(forecast_samples), np.min(data)), max(np.max(forecast_samples), np.max(data))

yrange = ymax-ymin

plt.title("{}".format('Observed time series with ' + str(num_steps_forecast) + ' Day Forecast'))

plt.xlabel('Day')

plt.ylabel('Daily Sample Size')

plt.legend()

plot_forecast_helper(observed_counts, forecast_samples, CI=80)

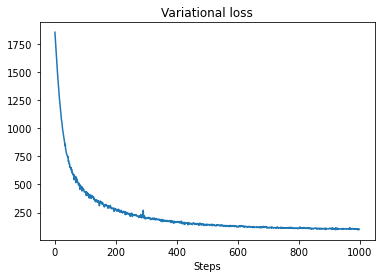

VI 推断

在推断一个完整的时间序列时,变分推断可能会出现问题,比如我们的近似计数(而不是像标准 STS 模型那样只推断一个时间序列的参数)。变量具有独立后验的标准假设是相当错误的,因为每个时间步骤都与其近邻相关,这可能会导致低估不确定度。因此,对于整个时间序列上的近似推断,HMC 可能是更好的选择。但是,VI 的速度要快得多,对于模型原型设计或能够在经验上证明其性能“足够好”的情况下,会非常有用。

为了用 VI 来拟合我们的模型,我们只需构建并优化一个代理后验:

surrogate_posterior = tfp.experimental.vi.build_factored_surrogate_posterior(

event_shape=pinned_model.event_shape,

bijector=constraining_bijector)

# Allow external control of optimization to reduce test runtimes.

num_variational_steps = 1000 # @param { isTemplate: true}

num_variational_steps = int(num_variational_steps)

t0 = time.time()

losses = tfp.vi.fit_surrogate_posterior(pinned_model.unnormalized_log_prob,

surrogate_posterior,

optimizer=tf.optimizers.Adam(0.1),

num_steps=num_variational_steps)

t1 = time.time()

print("Inference ran in {:.2f}s.".format(t1-t0))

Inference ran in 11.37s.

plt.plot(losses)

plt.title("Variational loss")

_ = plt.xlabel("Steps")

posterior_samples = surrogate_posterior.sample(50)

param_samples = posterior_samples[:-1]

unconstrained_rate_samples = posterior_samples[-1][..., 0]

rate_samples = positive_bijector.forward(unconstrained_rate_samples)

plt.figure(figsize=(10, 4))

mean_lower, mean_upper = np.percentile(rate_samples, [10, 90], axis=0)

pred_lower, pred_upper = np.percentile(

np.random.poisson(rate_samples), [10, 90], axis=0)

_ = plt.plot(observed_counts, color='blue', ls='--', marker='o',

label='observed', alpha=0.7)

_ = plt.plot(np.mean(rate_samples, axis=0), label='rate', color='green',

ls='dashed', lw=2, alpha=0.7)

_ = plt.fill_between(

np.arange(0, 30), mean_lower, mean_upper, color='green', alpha=0.2)

_ = plt.fill_between(np.arange(0, 30), pred_lower, pred_upper, color='grey',

label='counts', alpha=0.2)

plt.xlabel('Day')

plt.ylabel('Daily Sample Size')

plt.title('Posterior Mean')

plt.legend()

<matplotlib.legend.Legend at 0x7f93ff4735c0>

forecast_samples, rate_samples = sample_forecasted_counts(

sts_model,

posterior_latent_rates=unconstrained_rate_samples,

posterior_params=param_samples,

# Days to forecast:

num_steps_forecast=30,

num_sampled_forecasts=100)

forecast_samples = np.squeeze(forecast_samples)

plot_forecast_helper(observed_counts, forecast_samples, CI=80)