- Description:

The Places dataset is designed following principles of human visual cognition. Our goal is to build a core of visual knowledge that can be used to train artificial systems for high-level visual understanding tasks, such as scene context, object recognition, action and event prediction, and theory-of-mind inference.

The semantic categories of Places are defined by their function: the labels represent the entry-level of an environment. To illustrate, the dataset has different categories of bedrooms, or streets, etc., since one does not act the same way, or make the same predictions about what can happen next, in a home bedroom, a hotel bedroom, or a nursery. In total, Places contains more than 10 million images comprising 400+ unique scene categories. The dataset features 5,000 to 30,000 training images per class, consistent with real-world frequencies of occurrence. Using convolutional neural networks (CNNs), the Places dataset allows learning of deep scene features for various scene recognition tasks, with the goal of establishing new state-of-the-art performance on scene-centric benchmarks.

Here we provide the Places Database and the trained CNNs for academic research and education purposes.

Homepage: http://places2.csail.mit.edu/

Source code: tfds.datasets.placesfull.Builder

Versions:

1.0.0 (default): No release notes.

Download size: 143.56 GiB

Dataset size: 136.56 GiB

Auto-cached (documentation): No

Splits:

| Split | Examples |
|---|---|
| 'train' | 10,653,087 |
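For capacity planning, the sizes and example count above can be combined into a rough per-example estimate. The sketch below is back-of-the-envelope arithmetic only: it assumes the listed dataset size is spread evenly over all examples (it also covers the stored filename and label), and it uses the 256 x 256 x 3 uint8 image shape listed in the feature structure. The function names are illustrative and not part of TFDS.

```python
# Figures copied from this page; everything derived from them is an estimate.
GIB = 2**30
TIB = 2**40
NUM_EXAMPLES = 10_653_087
DATASET_SIZE_GIB = 136.56
IMAGE_SHAPE = (256, 256, 3)  # uint8, so one byte per value


def bytes_per_example_on_disk():
    """Average stored bytes per example (encoded image plus metadata)."""
    return DATASET_SIZE_GIB * GIB / NUM_EXAMPLES


def decoded_bytes_per_image():
    """Bytes needed for one decoded uint8 image in memory."""
    height, width, channels = IMAGE_SHAPE
    return height * width * channels


def decoded_total_tib():
    """Total size in TiB if every image were held decoded at once."""
    return decoded_bytes_per_image() * NUM_EXAMPLES / TIB


if __name__ == "__main__":
    print(f"on disk, per example: {bytes_per_example_on_disk() / 1024:.1f} KiB")
    print(f"decoded, per image:   {decoded_bytes_per_image() / 1024:.1f} KiB")
    print(f"decoded, whole split: {decoded_total_tib():.2f} TiB")
```

Under these assumptions the stored examples average somewhat under 14 KiB each, while the decoded split would occupy roughly 1.9 TiB, so caching decoded images in memory is unlikely to be practical on a single machine.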

- Feature structure:

FeaturesDict({
    'filename': Text(shape=(), dtype=string),
    'image': Image(shape=(256, 256, 3), dtype=uint8),
    'label': ClassLabel(shape=(), dtype=int64, num_classes=435),
})
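The feature structure above maps directly onto the dictionaries yielded by the dataset. The following is a minimal sketch of reading the 'train' split with `tfds.load`, assuming TensorFlow and TensorFlow Datasets are installed and that enough disk space is available for the download. The helper name `load_placesfull`, the batch size, and the pixel scaling to [0, 1] are illustrative choices, not something this page prescribes.

```python
# Values copied from the feature structure above.
IMAGE_SHAPE = (256, 256, 3)
NUM_CLASSES = 435


def load_placesfull(data_dir=None, batch_size=32):
    """Return a batched tf.data.Dataset over the 'train' split.

    Imports are deferred so that defining this helper does not require
    TensorFlow. The first call downloads and prepares the full dataset
    (143.56 GiB download according to this page).
    """
    import tensorflow as tf
    import tensorflow_datasets as tfds

    # Each element is a dict with 'filename', 'image' and 'label' keys.
    ds = tfds.load("placesfull", split="train", data_dir=data_dir)

    def to_float(example):
        # Scale uint8 pixels to [0, 1]; pass label and filename through.
        image = tf.cast(example["image"], tf.float32) / 255.0
        return {
            "image": image,
            "label": example["label"],
            "filename": example["filename"],
        }

    ds = ds.map(to_float, num_parallel_calls=tf.data.AUTOTUNE)
    return ds.batch(batch_size).prefetch(tf.data.AUTOTUNE)
```

Because every image has the same static shape, batches can be formed without padding or resizing. Calling `load_placesfull(data_dir="/path/with/space")` (a placeholder path) and iterating with `for batch in ds.take(1)` would be one way to inspect a first batch.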

- Feature documentation:

| Feature | Class | Shape | Dtype | Description |
|---|---|---|---|---|
| | FeaturesDict | | | |
| filename | Text | | string | |
| image | Image | (256, 256, 3) | uint8 | |
| label | ClassLabel | | int64 | |

Supervised keys (See

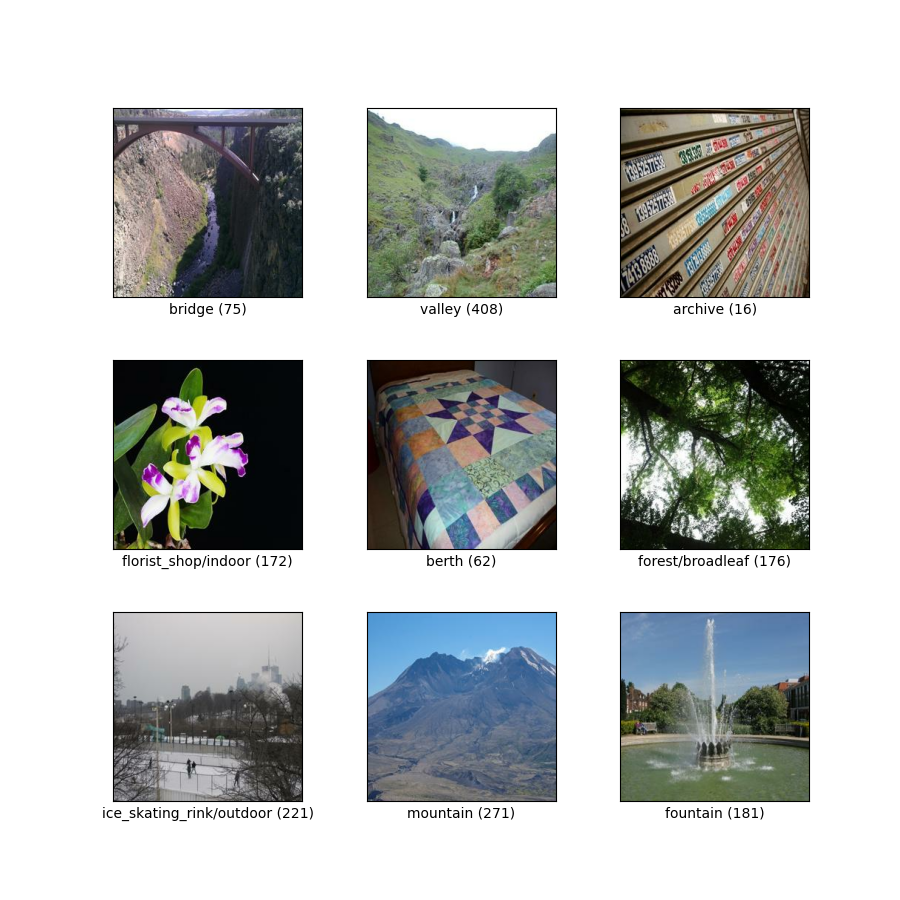

as_superviseddoc):('image', 'label', 'filename')Figure (tfds.show_examples):

- Citation:

@article{zhou2017places,
  title={Places: A 10 million Image Database for Scene Recognition},
  author={Zhou, Bolei and Lapedriza, Agata and Khosla, Aditya and Oliva, Aude and Torralba, Antonio},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  year={2017},
  publisher={IEEE}
}