- Description:

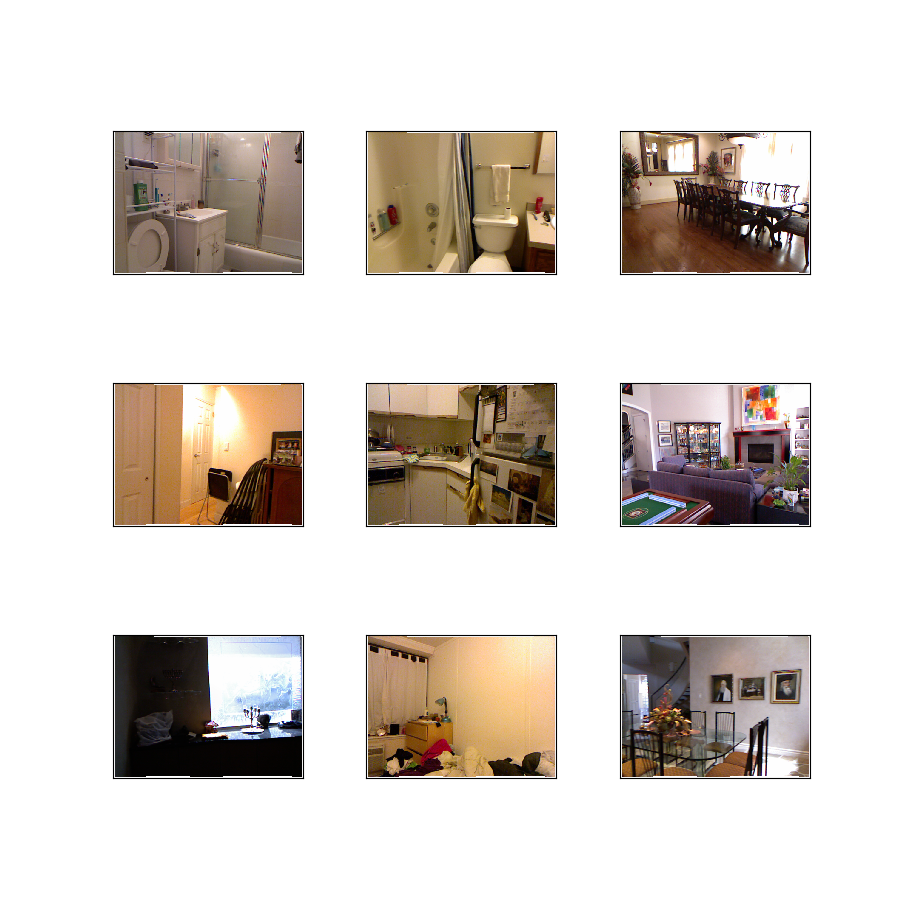

The NYU-Depth V2 dataset comprises video sequences from a variety of indoor scenes, recorded by both the RGB and depth cameras of the Microsoft Kinect.

Additional Documentation: Explore on Papers With Code

Homepage: https://cs.nyu.edu/~silberman/datasets/nyu_depth_v2.html

Source code: tfds.datasets.nyu_depth_v2.Builder

Versions:

0.0.1 (default): No release notes.

Download size: 31.92 GiB

Dataset size: 74.03 GiB

Auto-cached (documentation): No

Splits:

| Split | Examples |
|---|---|
| 'train' | 47,584 |
| 'validation' | 654 |
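As a rough illustration of how the two splits relate, the sketch below computes each split's share of the dataset from the counts in the table and wraps `tfds.load` in a small helper. The helper name `load_split` and its `data_dir` argument default are assumptions made for this example. The import is deferred because calling the helper triggers the 31.92 GiB download if the data is not already prepared.

```python
# Example counts copied from the splits table above.
SPLIT_SIZES = {"train": 47_584, "validation": 654}


def split_fractions(sizes):
    """Return each split's share of the total number of examples."""
    total = sum(sizes.values())
    return {name: count / total for name, count in sizes.items()}


def load_split(split="validation", data_dir=None):
    """Hypothetical helper: load one split of nyu_depth_v2 through TFDS.

    tensorflow_datasets is imported lazily so the arithmetic above can run
    without it installed. Calling this function downloads and prepares the
    dataset if it is not already present in data_dir.
    """
    import tensorflow_datasets as tfds

    return tfds.load("nyu_depth_v2", split=split, data_dir=data_dir)


total_examples = sum(SPLIT_SIZES.values())
fractions = split_fractions(SPLIT_SIZES)
for name, share in fractions.items():
    print(f"{name}: {SPLIT_SIZES[name]} examples ({share:.2%} of {total_examples})")
```

The validation split is small relative to the training split, so it may be the more practical choice for a first smoke test of a pipeline.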

- Feature structure:

FeaturesDict({

'depth': Tensor(shape=(480, 640), dtype=float16),

'image': Image(shape=(480, 640, 3), dtype=uint8),

})
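The shapes and dtypes in this structure determine how much memory one decoded example occupies, which can help when choosing a batch size. The following is a minimal sketch of that arithmetic in plain Python. The `FEATURES` dictionary simply restates the structure above, and the helper names are illustrative rather than part of TFDS.

```python
from math import prod

# Restatement of the feature structure above (shape and dtype per feature).
FEATURES = {
    "depth": {"shape": (480, 640), "dtype": "float16"},
    "image": {"shape": (480, 640, 3), "dtype": "uint8"},
}

# Size in bytes of one element of each dtype used by this dataset.
BYTES_PER_ELEMENT = {"float16": 2, "uint8": 1}


def decoded_bytes(features):
    """Bytes occupied by each feature once decoded into a dense array."""
    return {
        name: prod(spec["shape"]) * BYTES_PER_ELEMENT[spec["dtype"]]
        for name, spec in features.items()
    }


def batch_bytes(features, batch_size):
    """Bytes occupied by a batch of decoded examples."""
    return batch_size * sum(decoded_bytes(features).values())


per_feature = decoded_bytes(FEATURES)
per_example = sum(per_feature.values())
print(per_feature)
print(f"one example: {per_example} bytes")
print(f"batch of 32: {batch_bytes(FEATURES, 32) / 2**20:.3f} MiB")
```

This estimate covers decoded tensors only. It says nothing about the on-disk encoding, so it should not be expected to match the reported dataset size.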

- Feature documentation:

| Feature | Class | Shape | Dtype | Description |
|---|---|---|---|---|
| | FeaturesDict | | | |
| depth | Tensor | (480, 640) | float16 | |
| image | Image | (480, 640, 3) | uint8 | |
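This dataset declares `('image', 'depth')` as its supervised keys, so loading with `as_supervised=True` yields `(image, depth)` pairs instead of feature dictionaries. The sketch below mimics that mapping on a plain dictionary and shows one way the corresponding `tfds.load` call might look. `to_supervised` and `load_supervised` are hypothetical helper names, and the placeholder strings stand in for real tensors.

```python
# Supervised keys as listed for this dataset: (input, target).
SUPERVISED_KEYS = ("image", "depth")


def to_supervised(example, keys=SUPERVISED_KEYS):
    """Turn a feature dictionary into an (input, target) pair."""
    input_key, target_key = keys
    return example[input_key], example[target_key]


def load_supervised(split="validation", data_dir=None):
    """Hypothetical helper: load (image, depth) pairs through TFDS.

    The import is deferred because calling this function downloads and
    prepares the full dataset if it is not already available.
    """
    import tensorflow_datasets as tfds

    return tfds.load(
        "nyu_depth_v2", split=split, as_supervised=True, data_dir=data_dir
    )


# Placeholder values stand in for the real image and depth tensors.
example = {"depth": "<depth map>", "image": "<rgb frame>"}
model_input, target = to_supervised(example)
print(model_input, target)
```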

Supervised keys (See as_supervised doc): ('image', 'depth')

Figure (tfds.show_examples):

- Examples (tfds.as_dataframe):

- Citation:

@inproceedings{Silberman:ECCV12,

author = {Nathan Silberman and Derek Hoiem and Pushmeet Kohli and Rob Fergus},

title = {Indoor Segmentation and Support Inference from RGBD Images},

booktitle = {ECCV},

year = {2012}

}

@inproceedings{icra_2019_fastdepth,

author = {Wofk, Diana and Ma, Fangchang and Yang, Tien-Ju and Karaman, Sertac and Sze, Vivienne},

title = {FastDepth: Fast Monocular Depth Estimation on Embedded Systems},

booktitle = {IEEE International Conference on Robotics and Automation (ICRA)},

year = {2019}

}